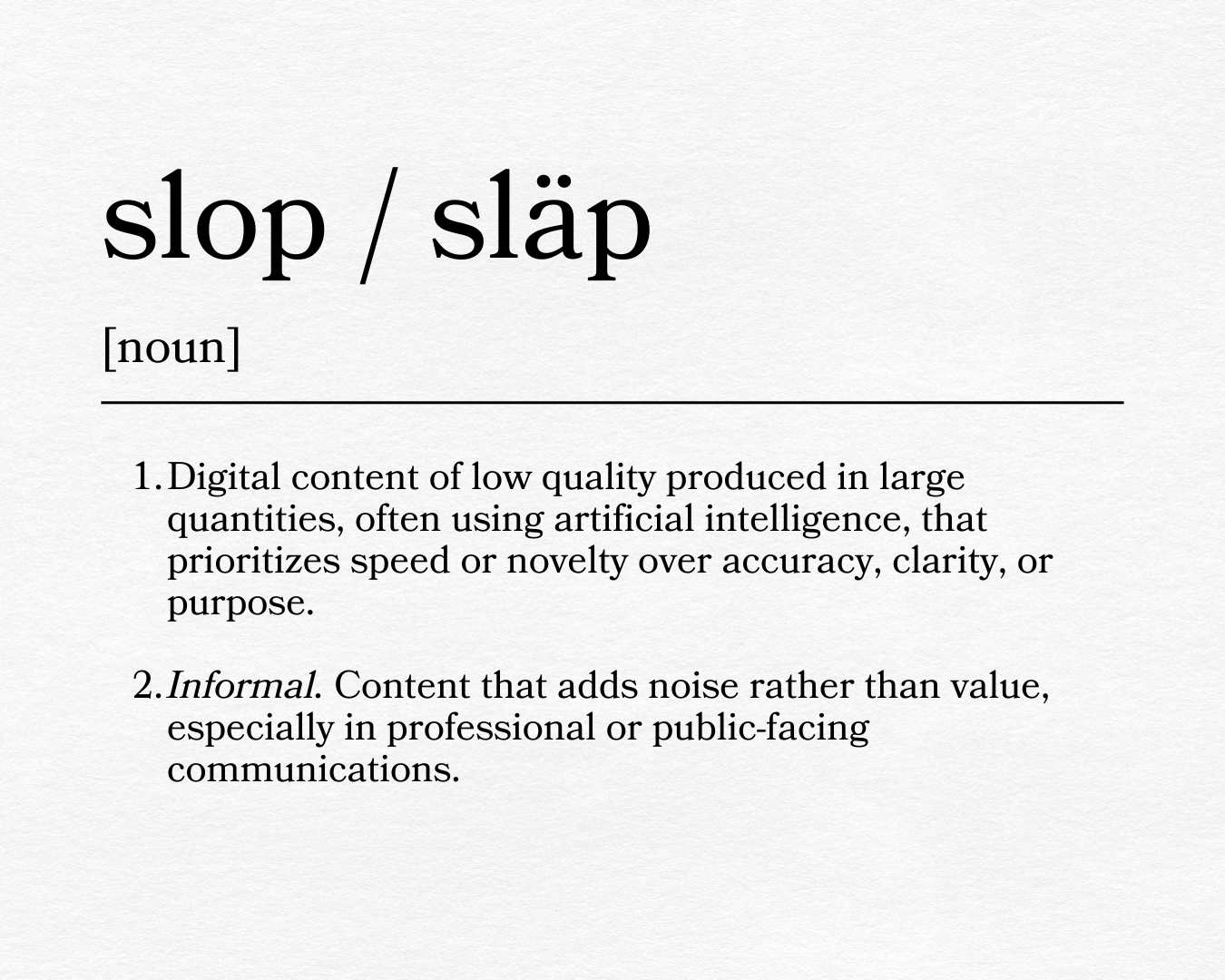

There’s a low-grade cultural frustration growing around the quantity of low-quality digital content produced at scale by artificial intelligence. Every news source has put out a quiz, “Can You Spot the AI?” So it comes as no surprise that “slop,” as it pertains to the daily deluge of hastily created content that offers little value (see also, Oxford University Press’ 2025 Word of the Year, “rage bait”), has entered the cultural conversation.

You’ve worked too hard to build trust with your community to let AI slop erode it all away in the blink of an eye. We’re in a human-first industry. We operate in a high-trust environment. Families, staff, and communities are relying on you to be clear, accurate, accessible, and, get this, human.

The answer to where AI belongs in your work is not “everywhere”; it’s “somewhere.” Knowing the difference matters.

AI can be incredibly useful when the goal is efficiency and organization. When ChatGPT launched in November 2022 (yes, it’s been that long), the early advice was to treat it like an intern. It’s great for taking a meeting transcript and turning it into minutes, complete with action items and next steps. Need a quick analysis of your GA4 data? Google Gemini to the rescue. Need a quick first draft in a crisis? A well-structured prompt template (or custom GPT, if you’ve built one) will get you started.

Used this way, AI doesn’t replace judgment—it supports it.

But we’ve all seen the generic phrases ChatGPT spits out: “You’re thinking about this exactly the right way,” or “That’s a really great insight that gets at the heart of the issue.”

What separates work that supports your organization from AI slop is the care you put into it: structured inputs, clear constraints, and human decision-making at the end.

Let’s say you have three goals today:

- Organize complex information handed to you from four departments on their contributions to the same initiative

- Reduce the friction happening in internal workflows so that you can get the pertinent information out to your community

- Help leaders think through the plethora of scenarios that might happen as a result of this initiative

These uses are fundamentally different from asking AI to generate a polished, public-facing article, graphic, or video and clicking “post.”

“AI works best when it’s given a job, not a voice,” says Andrew A. Hagen, Integrated Communications Coordinator. “It’s great at helping you move from chaos to clarity. But even if you gave it access to all of your climate surveys, it still wouldn’t understand your community or your values—this still has to come from people.”

In other words, AI can help schools move faster without sacrificing trust externally.

When speed undermines trust

We’ve all experienced it. A principal has an idea in July for something new to start in August. The board waits until the last minute to approve a referendum question. And this is to say nothing of the regular crisis communications, from student fights to buildings literally on fire to mid-year staff resignations.

Your community is looking for more than information. They’re looking for reassurance that someone is thoughtfully taking accountability for the messaging.

This is where slop tends to show up—not as obvious errors, but as content that exists without serving a clear purpose.

Images, video, and audio. Oh, my!

There’s a reason Buster Keaton’s stunts still captivate audiences a century later. We know a real person risked something real. No matter how technically flawless modern CGI becomes, it just can’t replicate that feeling of a 2-ton wall collapsing around a man who hit his precise mark.

AI-generated visuals can be helpful for conceptual work or internal planning. But when they’re used to represent real students or staff — or, worse yet, misrepresent your district in some way — they can feel artificial and misleading. In a high-trust environment, authenticity matters.

There are plenty of tools to help with editing, captioning, and repurposing content—particularly for accessibility. But a fully automated or overly polished video will fall flat when the message depends on empathy, clarity, or leadership’s presence.

Synthetic video and voices may save time, but they often lack the warmth and accountability that families expect and deserve—especially during times of change, uncertainty, or crisis.

Nobody wants to see a perfectly polished AI-generated student smiling through a calculus lesson. They want to see real students, in a real place, learning real lessons that will help them in real life, in school and beyond.

Nobody wants to hear the voice that greets you when you call your bank and land on an interminable hold: “Your call is important to us. Have you checked our website to accomplish your task?” They want to hear your superintendent say, “We see you. We care about you. This is what you need to know. This is how it’s going to impact you. And we’re here for you.”

Honesty: We adhere to the highest standards of accuracy and truth in advancing the interests of those we represent and in communicating with the public.

– Public Relations Society of America, Code of Ethics

Efficiency vs. substitution

“Effectiveness is dependent upon integrity and regard for ideals of the profession.”

— NSPRA Code of Ethics

There is a meaningful difference between using AI to support communications and using it as a substitute for them.

AI helps teams keep up. It can make accessibility easier to achieve.

What it cannot do is replace institutional knowledge, community awareness, or professional judgment.

Less AI slop, more substance

AI does have a role to play in your work. Use it thoughtfully. Let it support a team that’s stretched too thin, and let it help move important work forward.

Schools are, and always will be, a human-first industry. Trust is built in ways that can’t be automated.

Don’t publish content for the sake of publishing content. Communicate with purpose. Lean into your expertise. You’ve got this. And AI can help.